Introduction

Phone calls remain the dominant channel for sales, support, and recruitment, yet most businesses still rely entirely on human agents to handle them. While 88% of customers use phone calls for customer service, human-only teams face hard limits on availability, scale, and consistency that hiring alone can't fix. AI voice agents address this gap: they hold real, context-aware phone conversations 24/7, at scale.

The gap between a basic voice bot and a high-performing AI voice agent is significant. Traditional IVR systems force callers through rigid menu trees, while basic voice bots handle single tasks in isolation. True AI voice agents understand natural language, make autonomous decisions, and execute end-to-end workflows across CRM systems, calendars, and business tools with no human intervention required.

This guide walks through how to build one: the technology stack, the parameters that determine conversation quality, how to design effective conversation flows, and the pitfalls that kill performance before launch.

TL;DR

- AI voice agents combine STT, LLM, and TTS in a streaming pipeline to hold natural phone conversations, not just read scripts

- Custom builds demand careful stack selection, conversation design, and latency tuning to keep round-trip delay under 700ms

- Barge-in handling, voice quality, and smart escalation logic determine whether callers stay or hang up

- Pre-built platforms deploy in under an hour vs. months for custom builds, making them ideal when outcomes matter more than infrastructure

- Compliance (TCPA consent, GDPR disclosure, TRAI registration) must be built into the architecture from the start, not added after launch

What Is an AI Voice Agent and How Does It Work?

An AI voice agent is a software system that listens to spoken input, understands intent, generates contextually appropriate responses, and speaks that response back in real time, without a human operator. This distinguishes it from traditional IVR systems (which force callers through rigid keypad menus) and text-only chatbots (which lack voice capabilities entirely).

The Three-Component Pipeline

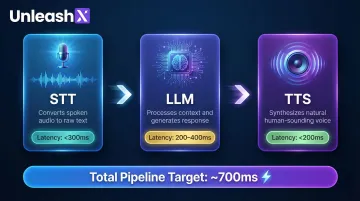

Every AI voice agent operates through a cascaded architecture connecting three specialized models:

- Speech-to-Text (STT) converts incoming audio into a text transcript. Systems like Deepgram Nova-3 and AssemblyAI Universal-3 Pro reach streaming latency under 300ms, fast enough for genuine back-and-forth conversation.

- Large Language Model (LLM) interprets the transcript, identifies intent, and generates a response. Its Time-to-First-Token (TTFT) typically runs 200–400ms and is the single biggest lever for how natural the exchange feels.

- Text-to-Speech (TTS) renders the LLM's text output as audio. Models like ElevenLabs Flash and Deepgram Aura-2 achieve Time-to-First-Audio under 200ms, keeping pauses short enough that callers don't notice the handoff.
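The cascade can be sketched with Python's `asyncio`, assuming hypothetical stub stages (`fake_stt`, `fake_llm`, `fake_tts`) in place of real vendor SDKs, whose client APIs differ. What the sketch illustrates is the hand-off: LLM tokens feed TTS as they arrive, so audio can start before the full reply exists.

```python
import asyncio
from typing import AsyncIterator, List

# Hypothetical stand-ins for vendor SDKs; real STT/LLM/TTS clients have different APIs.
async def fake_stt(audio_chunks: List[bytes]) -> AsyncIterator[str]:
    """Emit one transcript fragment per incoming audio chunk."""
    for chunk in audio_chunks:
        await asyncio.sleep(0)  # yield to the event loop, as a network read would
        yield chunk.decode()

async def fake_llm(transcript: str) -> AsyncIterator[str]:
    """Stream a reply token by token (here, a trivial echo)."""
    for token in f"You said: {transcript}".split(" "):
        await asyncio.sleep(0)
        yield token + " "

async def fake_tts(tokens: AsyncIterator[str]) -> AsyncIterator[bytes]:
    """Synthesize audio for each token as soon as it arrives."""
    async for token in tokens:
        yield token.encode()  # placeholder for real audio frames

async def handle_turn(audio_chunks: List[bytes]) -> bytes:
    # STT: a real system would act on streaming partials plus endpointing.
    transcript = " ".join([part async for part in fake_stt(audio_chunks)])
    # LLM -> TTS: tokens flow straight into synthesis without waiting for the full reply.
    audio = b""
    async for frame in fake_tts(fake_llm(transcript)):
        audio += frame  # in production, write each frame to the telephony stream
    return audio

if __name__ == "__main__":
    print(asyncio.run(handle_turn([b"book", b"a", b"demo"])).decode())
```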

Latency accumulates at each stage. A pipeline clocking 300ms (STT) + 400ms (LLM) + 200ms (TTS) produces 900ms of total delay, enough for callers to notice the pause and disengage.
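The same arithmetic as a small budget check. The stage figures are the example values above, and the 700ms budget is the round-trip target this guide uses; `excess_ms` is a hypothetical helper showing how much has to be cut somewhere in the pipeline.

```python
from typing import Dict

# Example stage latencies in milliseconds (from the worked example above).
STAGES_MS = {"stt": 300, "llm_ttft": 400, "tts_ttfa": 200}
BUDGET_MS = 700  # round-trip target used throughout this guide

def total_delay_ms(stages: Dict[str, int]) -> int:
    """In a cascade, each stage's delay adds directly to the caller-perceived pause."""
    return sum(stages.values())

def excess_ms(stages: Dict[str, int], budget_ms: int = BUDGET_MS) -> int:
    """How far over budget the pipeline is (0 if within budget)."""
    return max(0, total_delay_ms(stages) - budget_ms)

print(total_delay_ms(STAGES_MS))  # 900
print(excess_ms(STAGES_MS))       # 200
```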

That latency budget shapes every architectural decision you'll make. It also explains why use cases that demand both speed and scale are where these agents deliver the clearest advantage.

Real-World Use Cases

AI voice agents outperform traditional approaches wherever high volume, consistent availability, or multilingual reach is a constraint:

- Resolves inbound FAQs, account inquiries, and basic troubleshooting without escalation

- Runs outbound sales sequences (qualification calls, demo scheduling, and follow-ups) at any volume

- Manages appointment coordination end-to-end, including reminders and reschedules

- Screens inbound leads against defined criteria before routing to a human rep

- Conducts first-round HR interviews and advances qualified candidates automatically

What makes these use cases viable isn't just automation; it's the combination of 24/7 availability, support for 100+ languages, and the ability to run thousands of concurrent calls without performance degradation.

How to Build an AI Voice Agent: Step-by-Step

Architecture decisions made early directly determine performance and scalability later. Rushing through the first two steps is the most common reason voice agent projects stall or require expensive rebuilds.

Step 1: Define Your Use Case, Scope, and Requirements

Vague goals produce vague agents. Nail down the 3–5 core tasks your agent must accomplish and the failure conditions that should trigger human escalation.

Specify the agent's primary job:

- Inbound support: handling account inquiries and troubleshooting

- Outbound sales: qualifying leads and scheduling demos

- Lead qualification: collecting requirements and routing to sales

- HR screening: conducting initial candidate interviews

Define call volume requirements. Whether you're handling 100 calls per day or 10,000 per month determines your infrastructure needs and cost structure.
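Call volume translates into infrastructure mainly through peak concurrency. A rough estimate, assuming Little's law (average concurrency = arrival rate × average call duration) plus two illustrative assumptions: calls spread evenly over an 8-hour business day, and a 2× burst factor. Both defaults are placeholders to replace with your own traffic data.

```python
import math

def peak_concurrent_calls(calls_per_day: float, avg_call_minutes: float,
                          busy_hours: float = 8.0, burst_factor: float = 2.0) -> int:
    """Rough peak concurrency via Little's law: L = arrival rate x time in system.

    `busy_hours` and `burst_factor` are illustrative assumptions, not benchmarks.
    """
    arrivals_per_minute = calls_per_day / (busy_hours * 60)
    average_concurrency = arrivals_per_minute * avg_call_minutes
    return math.ceil(average_concurrency * burst_factor)

print(peak_concurrent_calls(100, 4))   # 2
print(peak_concurrent_calls(2000, 4))  # 34
```

With 4-minute calls, 100 calls per day needs about 2 concurrent lines under these assumptions, while 2,000 calls per day needs about 34.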

Identify required integrations upfront:

- CRM systems (Salesforce, HubSpot) for logging call outcomes and updating lead status

- Calendar tools (Calendly, Google Calendar) for scheduling appointments

- Ticketing platforms (Zendesk, Jira) for support workflows

- Databases the agent must read from or write to during calls

Missing these in planning leads to costly rework. Integration complexity often exceeds the voice pipeline itself.

Step 2: Choose Your Tech Stack

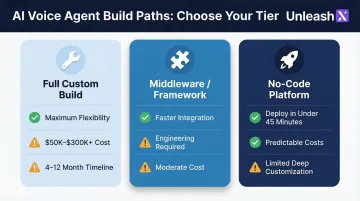

Three build-path options exist, each with distinct trade-offs:

Full custom build using raw APIs:

- STT: Deepgram, AssemblyAI, or OpenAI Whisper

- LLM: OpenAI GPT-4, Anthropic Claude, or open-source models

- TTS: ElevenLabs, Deepgram Aura, or Google Cloud TTS

- Telephony: Twilio, Plivo, or SIP trunking providers

- Trade-off: Maximum flexibility and control, but requires 4-12 months and $50,000-$300,000+ upfront investment

Voice AI framework or middleware:

- Platforms like LiveKit, Retell AI, or Vapi provide pre-integrated STT/LLM/TTS pipelines

- Trade-off: Faster than raw APIs, but still requires engineering expertise and ongoing maintenance

No-code/low-code platform:

- Bundled solutions like UnleashX deploy production-ready agents in under 45 minutes using pre-built templates

- Trade-off: Faster deployment and predictable costs, but some constraints on deep customization

Key selection criteria:

| Factor | Target | Why It Matters |
|---|---|---|
| Latency | <700ms round-trip | Delays over 500ms feel robotic; human turn-taking gaps average 200ms |
| Language support | Match your market | Indian markets need Hindi, Tamil, Bangla; global deployments need 100+ languages |
| Telephony compatibility | SIP/WebRTC vs. phone APIs | SIP offers lower per-minute costs; phone APIs (Twilio) offer faster setup |
| Compliance | TCPA, GDPR, TRAI | Legal requirements vary by region; non-compliance creates massive liability |

Step 3: Design the Conversation Flow and Agent Personality

A well-designed conversation flow covers every stage of the call from first word to final handoff:

- Opens with a greeting that sets tone and discloses AI identity where legally required

- Detects primary caller intent within the first 10 seconds

- Branches into distinct dialogue paths per scenario (billing inquiry vs. technical support)

- Fills required data slots (such as name, account number, and date) before moving forward

- Redirects off-topic input without sounding robotic or frustrated

- Transfers to a human agent cleanly when the AI reaches its defined limits
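The intent-then-slots structure above can be sketched as a small state function. The intents, slot names, and action strings are hypothetical examples; a real flow would also handle corrections, confirmations, and off-topic redirects.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical intents mapped to the slots each one needs before the agent can act.
REQUIRED_SLOTS: Dict[str, List[str]] = {
    "billing_inquiry": ["name", "account_number"],
    "book_appointment": ["name", "date"],
}

@dataclass
class CallState:
    intent: Optional[str] = None
    slots: Dict[str, str] = field(default_factory=dict)

def next_action(state: CallState) -> str:
    """Pick the next move: detect intent, fill missing slots, then act or hand off."""
    if state.intent is None:
        return "ask_intent"
    if state.intent not in REQUIRED_SLOTS:
        return "transfer_to_human"  # outside the agent's defined scope
    for slot in REQUIRED_SLOTS[state.intent]:
        if slot not in state.slots:
            return f"ask_{slot}"  # ask for the first missing slot, one at a time
    return f"fulfill_{state.intent}"

state = CallState(intent="billing_inquiry", slots={"name": "Asha"})
print(next_action(state))  # ask_account_number
```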

Writing effective system prompts:

The quality of your LLM prompt directly determines response quality. Four elements must be explicit in every prompt:

- Define a named persona with a consistent tone and language register (formal or conversational)

- Scope what the agent knows and where it defers to a human

- List prohibited statements: pricing promises, legal advice, and unauthorized claims

- Specify how to handle ambiguous input without frustrating the caller
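One way to keep those four elements explicit is to assemble the prompt from structured fields instead of free-writing it each time. A minimal sketch; the function name, field names, and example persona are hypothetical.

```python
from typing import List

def build_system_prompt(persona: str, register: str, can_help_with: List[str],
                        defer_to_human: List[str], never_say: List[str],
                        when_ambiguous: str) -> str:
    """Assemble the four required elements into one explicit system prompt."""
    return "\n".join([
        f"You are {persona}. Speak in a {register} register.",    # persona + tone
        "You can help with: " + "; ".join(can_help_with) + ".",   # knowledge scope
        "Transfer to a human for: " + "; ".join(defer_to_human) + ".",
        "Never: " + "; ".join(never_say) + ".",                    # prohibited statements
        "If the caller's request is ambiguous: " + when_ambiguous,
    ])

print(build_system_prompt(
    persona="Maya, a support agent for a hypothetical online retailer",
    register="conversational",
    can_help_with=["order status", "delivery rescheduling"],
    defer_to_human=["refund disputes", "legal questions"],
    never_say=["promise discounts or prices", "give legal advice"],
    when_ambiguous="ask one short clarifying question instead of guessing.",
))
```

Keeping the fields separate also makes weekly prompt iteration easier to review, since each change touches one named element.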

Prompt refinement is ongoing. Real call transcripts will surface gaps that no amount of pre-launch testing can predict, so plan for weekly iteration in the first month.

Step 4: Build, Integrate, and Deploy

Technical setup:

- Connect STT and TTS APIs to your orchestration layer

- Wire the LLM into the pipeline with streaming enabled

- Configure telephony: a SIP trunk or a phone number API such as Twilio

- Set up webhooks to push call data (transcripts, outcomes, collected fields) to CRM and downstream systems
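A sketch of the webhook push, assuming a generic JSON payload signed with HMAC-SHA256 so the receiving endpoint can verify it came from your orchestration layer. The payload fields and signing scheme are assumptions, not a Salesforce or HubSpot API; each CRM has its own ingestion interface. Only the Python standard library is used.

```python
import hashlib
import hmac
import json
from typing import Any, Dict, List, Tuple

def build_webhook(call_id: str, outcome: str, fields: Dict[str, Any],
                  transcript: List[Dict[str, str]], secret: bytes) -> Tuple[bytes, str]:
    """Serialize post-call data and sign it with HMAC-SHA256."""
    body = json.dumps(
        {"call_id": call_id, "outcome": outcome, "fields": fields, "transcript": transcript},
        sort_keys=True, separators=(",", ":"),
    ).encode("utf-8")
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return body, signature

def verify_webhook(body: bytes, signature: str, secret: bytes) -> bool:
    """Receiver side: recompute the signature and compare in constant time."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)

body, sig = build_webhook(
    call_id="call-001", outcome="demo_booked",
    fields={"name": "Asha", "date": "2025-07-01"},
    transcript=[{"role": "agent", "text": "Hi, this is Maya."}],
    secret=b"shared-secret",  # hypothetical; load from a secrets manager in practice
)
print(verify_webhook(body, sig, b"shared-secret"))  # True
```

In practice the body would be sent with an HTTP POST, with the signature in a request header agreed with the receiver.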

Once the pipeline is connected, run through this checklist before going live:

- Test with simulated noisy audio environments (background noise, poor connections)

- Verify barge-in behavior: the agent should stop speaking immediately when the user interrupts

- Confirm voicemail detection for outbound calls, so the agent can tell an answering machine from a live person and doesn't leave a message that begins mid-conversation

- Run compliance checks: consent disclosures, call recording notices, DNC scrubbing

- Stress-test with concurrent calls before going live to identify bottlenecks
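For the concurrency stress test, a minimal `asyncio` harness, assuming a hypothetical `simulated_call` coroutine standing in for a real call through your pipeline (the random sleep is a placeholder, not a latency model). It starts many calls at once and collects per-turn latency, so once real calls are wired in, bottlenecks show up as rising worst-case turn latency.

```python
import asyncio
import random
import time
from typing import List

async def simulated_call(turns: int = 3) -> List[float]:
    """Hypothetical stand-in for one call; replace the sleep with real pipeline I/O."""
    latencies_ms = []
    for _ in range(turns):
        start = time.perf_counter()
        await asyncio.sleep(random.uniform(0.01, 0.03))  # placeholder for one agent turn
        latencies_ms.append((time.perf_counter() - start) * 1000)
    return latencies_ms

async def stress_test(concurrent_calls: int) -> List[float]:
    """Start all calls at once and gather every turn's latency in milliseconds."""
    per_call = await asyncio.gather(*(simulated_call() for _ in range(concurrent_calls)))
    return [ms for call in per_call for ms in call]

if __name__ == "__main__":
    samples = sorted(asyncio.run(stress_test(200)))
    print(f"turns={len(samples)} worst={samples[-1]:.1f}ms")
```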

Key Technical Parameters That Affect Voice Agent Performance

The difference between an AI voice agent that feels human and one that feels robotic usually comes down to four controllable parameters, not just the choice of LLM.

Latency Budget

Each pipeline stage consumes time. Research shows human conversational turn-taking gaps average just 200ms, and any delay over 500ms in an AI voice agent feels robotic and unnatural.

Industry best practice targets:

- STT partial response: <150ms

- LLM first-token response: <300ms

- TTS audio start: <200ms

- Total round-trip: <700ms

Exceeding these creates unnatural pauses that break conversational flow. Achieving sub-second latency requires abandoning batch processing in favor of fully streaming architectures and colocating media edge servers near users.
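Why streaming matters can be shown with a simplified time-to-first-audio model. It assumes the LLM generates at a fixed `tokens_per_s`, that batch mode waits for the whole reply before TTS starts, and that streaming mode hands TTS a first chunk of `first_chunk_tokens` tokens (a hypothetical phrase size). Network and buffering overhead are ignored.

```python
def first_audio_ms(stt_ms: float, llm_ttft_ms: float, reply_tokens: int,
                   tokens_per_s: float, tts_ttfa_ms: float, streaming: bool,
                   first_chunk_tokens: int = 5) -> float:
    """Simplified delay from end of caller speech to first agent audio.

    Batch mode waits for every reply token before TTS starts; streaming mode
    sends TTS only the first `first_chunk_tokens` (a hypothetical phrase size).
    """
    tokens_waited = min(first_chunk_tokens, reply_tokens) if streaming else reply_tokens
    generation_ms = tokens_waited * 1000 / tokens_per_s
    return stt_ms + llm_ttft_ms + generation_ms + tts_ttfa_ms

print(first_audio_ms(150, 300, 60, 100, 200, streaming=False))  # 1250.0
print(first_audio_ms(150, 300, 60, 100, 200, streaming=True))   # 700.0
```

With the stage targets above (150ms STT, 300ms TTFT, 200ms TTS) and an illustrative 60-token reply at 100 tokens/s, batch mode reaches first audio at 1,250ms, while streaming reaches it at 700ms.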

Barge-In and Turn-Taking

Barge-in (allowing the user to interrupt the agent mid-speech) is critical for natural conversation. Without it, callers feel trapped listening to long responses they don't need.

Voice Activity Detection (VAD) detects when a user starts speaking and should trigger the agent to pause or yield. Basic silence-based VAD frequently cuts off users who pause to think or false-triggers on background noise. Enterprise deployments must use semantic or model-driven turn detection to distinguish genuine interruptions from conversational backchannels like "uh-huh" or "okay."
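The backchannel distinction can be illustrated with a lexical heuristic. This is a deliberately simple stand-in, not the semantic or model-driven turn detection recommended above: it treats a partial transcript made only of words from a hypothetical `BACKCHANNELS` set as a listening cue rather than a barge-in.

```python
import re

# Hypothetical backchannel vocabulary; model-driven detectors learn this from data.
BACKCHANNELS = {"uh-huh", "mm-hmm", "okay", "ok", "yeah", "right", "sure", "hmm"}

def is_real_interruption(partial_transcript: str) -> bool:
    """Lexical heuristic: yield the floor only if the caller said something substantive."""
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", partial_transcript.lower())
    if not words:
        return False  # VAD fired on noise that produced no words
    return not all(word in BACKCHANNELS for word in words)

print(is_real_interruption("Uh-huh, okay."))       # False
print(is_real_interruption("Wait, that's wrong"))  # True
```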

On interruption, the system must execute an explicit TTS playback cancel, flush audio buffers, and abort the LLM stream immediately. Skip any of those steps and the agent keeps talking over the caller, producing a disorienting stutter effect.
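The three cancellation steps can be sketched with `asyncio` task cancellation. `AgentTurn`, its fields, and the token loop in `speak` are hypothetical stand-ins for a real TTS player and streaming LLM client; the part meant to carry over is the order of operations in `on_barge_in`.

```python
import asyncio
from typing import List, Optional

class AgentTurn:
    """Hypothetical controller for one agent utterance (stand-in for real TTS + LLM clients)."""

    def __init__(self) -> None:
        self.audio_buffer: List[bytes] = []
        self.llm_task: Optional[asyncio.Task] = None
        self.playing = False

    async def speak(self, reply_tokens: List[str]) -> None:
        self.playing = True
        for token in reply_tokens:  # placeholder for streamed LLM tokens -> TTS frames
            self.audio_buffer.append(token.encode())
            await asyncio.sleep(0.01)
        self.playing = False

    async def on_barge_in(self) -> None:
        """Stop playback, flush queued audio, and abort generation, in that order."""
        self.playing = False           # 1. cancel TTS playback
        self.audio_buffer.clear()      # 2. flush audio that has not been sent yet
        if self.llm_task is not None and not self.llm_task.done():
            self.llm_task.cancel()     # 3. abort the LLM stream
            try:
                await self.llm_task
            except asyncio.CancelledError:
                pass  # expected: the stream was cancelled on purpose

async def demo() -> AgentTurn:
    turn = AgentTurn()
    turn.llm_task = asyncio.create_task(
        turn.speak(["Sure,", "let", "me", "explain", "our", "plans"]))
    await asyncio.sleep(0.025)  # caller starts talking mid-reply
    await turn.on_barge_in()
    return turn

if __name__ == "__main__":
    t = asyncio.run(demo())
    print(t.playing, t.audio_buffer, t.llm_task.cancelled())  # False [] True
```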

Voice Quality and Naturalness

TTS voice selection, speech rate, and prosody (pauses, emphasis) directly shape perceived trustworthiness. Prosody remains a major factor in assessments of naturalness, with fundamental frequency patterns emerging as key differentiators between synthetic and human speech.

For multilingual or regional deployments, especially in markets like India, accent-matched voices directly improve comprehension and caller trust. Users want AI systems that reflect their own linguistic backgrounds: familiar accents build confidence, while foreign or robotic ones cause friction.

For regional Indian markets, this means native-sounding support across:

- Hindi, Hinglish, and Marathi

- Tamil and Bengali

- Additional vernacular languages that match your caller base

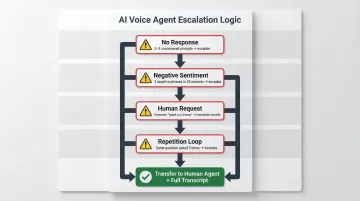

Escalation Logic

Hard-coded escalation triggers matter as much as conversational quality. A voice agent without good escalation logic erodes trust when it gets stuck in loops.

Effective escalation triggers:

- Transfer to a human if the caller fails to respond after 2-3 prompts

- Trigger immediate human intervention when two negative phrases appear within 30 seconds (e.g., "still not fixed" → "unacceptable")

- Escalate instantly, with no exceptions, on "speak to a human," "transfer me," or "get me a person"

- Flag a repetition loop if the same question is asked three times, since it means the AI is not answering it correctly
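The four triggers can be combined into one per-turn check. A minimal sketch with hypothetical phrase lists and thresholds taken from the rules above (3 missed prompts, 2 negative phrases within 30 seconds, 3 repeats). Repetition detection here uses exact text matching, which a real system would replace with intent matching.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

HUMAN_REQUESTS = ("speak to a human", "transfer me", "get me a person")
NEGATIVE_PHRASES = ("still not fixed", "unacceptable", "this is useless")  # hypothetical list

@dataclass
class EscalationTracker:
    max_silent_prompts: int = 3
    negative_window_s: float = 30.0
    max_repeats: int = 3
    silent_prompts: int = 0
    negative_times: List[float] = field(default_factory=list)
    repeats: Dict[str, int] = field(default_factory=dict)

    def check(self, utterance: Optional[str], now_s: float) -> Optional[str]:
        """Return an escalation reason for this caller turn, or None to continue."""
        if utterance is None or not utterance.strip():
            self.silent_prompts += 1
            return "no_response" if self.silent_prompts >= self.max_silent_prompts else None
        self.silent_prompts = 0
        text = utterance.strip().lower()
        if any(phrase in text for phrase in HUMAN_REQUESTS):
            return "human_requested"  # no exceptions
        if any(phrase in text for phrase in NEGATIVE_PHRASES):
            recent = [t for t in self.negative_times if now_s - t <= self.negative_window_s]
            self.negative_times = recent + [now_s]
            if len(self.negative_times) >= 2:
                return "negative_sentiment"
        self.repeats[text] = self.repeats.get(text, 0) + 1  # exact-match repeat counter
        if self.repeats[text] >= self.max_repeats:
            return "repetition_loop"
        return None
```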

On escalation, pass the full conversation transcript including sentiment and intent signals to the live agent so the customer never has to repeat themselves.

Common Mistakes When Building AI Voice Agents

Skipping End-to-End Latency Testing Before Launch

Developers often test each component (STT, LLM, TTS) individually and miss cumulative latency. A pipeline where each part meets spec can still produce a 1.5-second round trip that makes the agent sound broken.

Always benchmark the full call loop under realistic conditions: concurrent calls, network jitter, and real telephony infrastructure. Vendor latency claims often reflect isolated model inference (for example, 75ms for TTS) but real-world "mouth-to-ear" latency includes network round-trips, audio player buffering, and orchestration overhead.
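When benchmarking, report tail percentiles rather than an average, since the occasional slow turn is what callers notice. A minimal sketch using the nearest-rank percentile method; the samples should be end-to-end mouth-to-ear measurements, and the 700ms budget is this guide's round-trip target.

```python
import math
from typing import Dict, List

def percentile(samples_ms: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples_ms or not 0 < pct <= 100:
        raise ValueError("need at least one sample and 0 < pct <= 100")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]

def latency_report(samples_ms: List[float], budget_ms: float = 700) -> Dict[str, float]:
    """Summarize mouth-to-ear samples against the round-trip budget."""
    return {
        "p50": percentile(samples_ms, 50),
        "p95": percentile(samples_ms, 95),
        "share_over_budget": sum(s > budget_ms for s in samples_ms) / len(samples_ms),
    }

print(latency_report([500, 520, 540, 560, 580, 600, 620, 640, 660, 1500]))
# {'p50': 580, 'p95': 1500, 'share_over_budget': 0.1}
```

In this illustrative sample, the median looks healthy while one turn in ten blows the budget, which an average would blur.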

Over-Prompting or Under-Constraining the LLM

Agents given too much freedom hallucinate, go off-topic, or make unauthorized claims. Agents given too little context produce robotic, unhelpful responses.

The right balance requires:

- Define a clear persona with explicit role, tone, and topic scope

- Set hard guardrails specifying what the agent must never say or claim

- Implement slot-filling logic for structured data collection (names, dates, account numbers)

- Map escalation triggers for topics outside the agent's authorized scope
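Hard guardrails can be backed by a last-line output check before text reaches TTS. A minimal sketch with hypothetical regex rules and fallback wording; pattern matching is coarse and complements, rather than replaces, the constraints written into the prompt.

```python
import re
from typing import List, Tuple

# Hypothetical rules; derive real ones from your own prohibited-statement list.
PROHIBITED = {
    "pricing_promise": re.compile(r"\b(guarantee|promise)\b.*\b(price|discount|refund)", re.IGNORECASE),
    "legal_advice": re.compile(r"\byou should (sue|file a lawsuit)\b", re.IGNORECASE),
}
SAFE_FALLBACK = "I can't confirm that on this call, but I can connect you with a colleague who can."

def enforce_guardrails(reply: str) -> Tuple[str, List[str]]:
    """Check an LLM reply before it reaches TTS; substitute a safe line if any rule fires."""
    violations = [name for name, rule in PROHIBITED.items() if rule.search(reply)]
    return (SAFE_FALLBACK if violations else reply), violations

print(enforce_guardrails("I promise you a 20% discount."))
```

Logging the returned violation names alongside the transcript also gives the weekly prompt review a concrete list of where the model drifted.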

Ignoring Compliance Requirements at Build Time

Consent collection, call recording disclosures, DNC scrubbing for outbound, and data retention policies are legal obligations in most markets. Attempting to retrofit these after deployment is expensive and risky.

TCPA (US): In February 2024, the FCC ruled that calls using AI-generated voices fall under the TCPA's artificial-voice restrictions and require prior express consent. Non-compliance carries severe penalties: the FCC recently proposed a $6 million (approximately ₹49.8 crore) fine for illegal robocalls using AI voice cloning.

EU (GDPR and AI Act): Article 50 of the EU AI Act requires that people be informed they are interacting with an AI system no later than the first interaction. Separately, under GDPR, processing voice data to identify a user counts as special category (biometric) data, which requires explicit consent.

TRAI (India): The February 2025 TCCCPR Amendment requires commercial calls to originate from designated number series (140-series for promotional, 1600-series for transactional), with advance declaration of auto-dialer usage. Unregistered telemarketers face immediate suspension and financial penalties up to ₹50 lakhs per month.
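These obligations can be enforced as a pre-dial gate in the outbound dialer. A minimal sketch, assuming a hypothetical `OutboundCall` record, an in-memory DNC set, and Indian caller IDs stored in national format without a country code. It encodes only the rules summarized above; it is not legal advice and not a complete rule set for any jurisdiction (registration, recording notices, and retention are not checked).

```python
from dataclasses import dataclass
from typing import List, Set

@dataclass
class OutboundCall:  # hypothetical record; field names are illustrative
    to_number: str
    caller_id: str       # Indian numbers assumed in national format, e.g. "140..."
    purpose: str         # "promotional" or "transactional"
    region: str          # "US", "EU", or "IN"
    has_prior_consent: bool
    discloses_ai: bool   # greeting tells the caller they are speaking to an AI

def pre_dial_blockers(call: OutboundCall, dnc_list: Set[str]) -> List[str]:
    """Reasons to block this call; an empty list means none of these rules object."""
    blockers = []
    if call.to_number in dnc_list:
        blockers.append("number is on the do-not-call list")
    if call.region == "US" and not call.has_prior_consent:
        blockers.append("TCPA: no prior express consent for an AI-generated voice")
    if call.region == "EU" and not call.discloses_ai:
        blockers.append("EU AI Act Art. 50: AI identity not disclosed at first interaction")
    if call.region == "IN":
        series = "140" if call.purpose == "promotional" else "1600"
        if not call.caller_id.startswith(series):
            blockers.append(f"TRAI: {call.purpose} calls must come from the {series} series")
    return blockers
```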

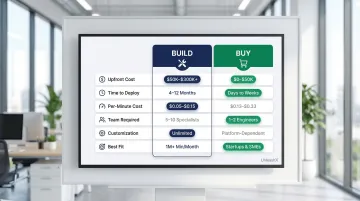

Build vs. Buy: When a Pre-Built Platform Makes More Sense

Building a voice agent from scratch makes sense when you need full architectural control, highly custom workflows, or deeply proprietary data pipelines. For most business teams, however, the engineering overhead competes directly with the business outcomes the agent is supposed to deliver.

When Pre-Built Platforms Win

Pre-built platforms are clearly better when:

- Deployment speed is critical: days to market beats months of build time when opportunity is on the line

- Your team lacks voice AI engineering depth: maintaining STT/LLM/TTS integrations, telephony, and compliance updates requires specialized skills most business teams don't have

- You need multi-language support immediately: 100+ languages and regional accent coverage require infrastructure investment that takes years to build internally

- The use case is already proven: sales outreach, customer support, HR screening, and lead qualification all have established best practices on modern platforms

Platforms like UnleashX offer pre-built AI voice employees (Peter for sales, James for recruitment) that deploy in approximately 45 minutes with CRM integrations, 100+ language support, and built-in compliance monitoring. For teams prioritizing speed and results over infrastructure ownership, that's a meaningful difference.

Key Trade-Offs to Evaluate

| Factor | Build | Buy |
|---|---|---|
| Upfront cost | $50,000–$300,000+ | $0–$50,000 (usage-based) |
| Time to deploy | 4–12 months | Days to weeks |
| Per-minute cost (at scale) | $0.05–$0.15 | $0.13–$0.33 |
| Engineering team required | 5–10 specialists | 1–2 integration engineers |
| Customization ceiling | Unlimited | Platform-dependent |
| Best fit | >1M minutes/month, highly regulated industries | Startups, SMEs, rapid deployment needs |

Custom builds only make financial sense for enterprises processing over 1 million minutes per month, where the per-minute API cost differential generates measurable savings. Below that threshold, a platform delivers faster results at lower total cost.
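The threshold can be checked with simple arithmetic. A sketch using the per-minute and upfront ranges from the table; the $60,000/month net extra cost of the in-house team is a hypothetical placeholder, and the model ignores platform subscription fees, infrastructure, and compliance work.

```python
def monthly_savings(minutes: float, build_rate: float, buy_rate: float,
                    extra_team_cost: float) -> float:
    """Net monthly saving of a custom build over a platform (negative = build costs more)."""
    return minutes * (buy_rate - build_rate) - extra_team_cost

def payback_months(upfront: float, savings_per_month: float) -> float:
    """Months to recover the upfront build cost; infinite if savings never turn positive."""
    return upfront / savings_per_month if savings_per_month > 0 else float("inf")

# Midpoints of the table's ranges, plus a hypothetical $60,000/month extra team cost.
for minutes in (200_000, 1_000_000):
    saving = monthly_savings(minutes, build_rate=0.10, buy_rate=0.23, extra_team_cost=60_000)
    print(minutes, round(saving), payback_months(175_000, saving))
```

With midpoint figures ($175,000 upfront, $0.10 vs. $0.23 per minute) and that hypothetical team cost, 1 million minutes per month saves about $70,000 per month and pays back the build in roughly 2.5 months, while 200,000 minutes per month never pays back.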

Frequently Asked Questions

Is using AI voice illegal?

AI voice agents are legal in most markets but subject to specific regulations: consent requirements before recording calls, disclosure obligations (informing callers they are speaking to an AI), and outbound call restrictions under TCPA (US), GDPR (EU), and TRAI (India). Non-compliance, not the technology itself, creates legal risk.

What are the 7 types of AI agents?

AI agents are categorized by decision-making architecture: simple reflex, model-based reflex, goal-based, utility-based, learning, hierarchical, and multi-agent systems. Voice agents typically fall into goal-based or utility-based categories, pursuing defined objectives like scheduling an appointment or qualifying a lead within a conversation.

What is the difference between an AI voice agent and a traditional IVR?

Traditional IVR forces callers to navigate rigid pre-programmed menus using keypad inputs, while an AI voice agent understands natural spoken language, handles open-ended questions, and adapts responses based on context. The result is a conversation, not a menu.

How do AI voice agents handle Hindi and regional languages for Indian customers?

Modern voice AI platforms support natural conversations in Hindi, Tamil, Telugu, Kannada, Marathi, Bengali, and code-mixed Hinglish. UnleashX voice agents detect caller language automatically, switch mid-call where needed, and integrate with WhatsApp for follow-ups, which matches how Indian buyers in BFSI, real estate, and D2C actually engage.

Which Indian companies are deploying AI voice agents in production?

Indian BFSI majors (HDFC, ICICI, SBI, Axis), real estate firms, lending NBFCs, and D2C brands are running voice AI in production for sales calls, KYC follow-ups, and customer support. NASSCOM has tracked rapid adoption across IT services and BPO firms (TCS, Infosys, Wipro, HCLTech) building voice AI practices for Indian and global enterprise clients.

What Indian regulations should I consider before deploying voice AI?

DPDP 2023 governs personal data handling in voice interactions, including consent capture and audit trails. RBI guidelines on outsourcing apply to BFSI deployments, IRDAI rules cover insurance workflows, and TRAI's commercial communication rules govern outbound calling. Production-grade voice AI platforms maintain call recordings, language logs, and consent receipts to meet these requirements.

Want to see how UnleashX AI Employees can transform your business? Visit UnleashX to explore the full platform and book a personalized demo.