Introduction

AI voice technology is no longer experimental, but most deployments still fail. Enterprises across sales, customer support, and HR are deploying it at scale, yet teams keep running into the same problem: the voice bot goes live, adoption stalls, and the ROI never materializes. The gap between "deploying a voice bot" and "running a voice-powered operation" is where most businesses lose momentum.

According to Gartner, 40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025. Yet 95% of enterprise AI initiatives currently deliver zero measurable return. The difference between success and failure comes down to execution fundamentals.

This article covers five best practices for integrating AI voice technology in a way that drives measurable outcomes, not just a proof-of-concept that gets quietly shelved after the first quarter.

TL;DR

- Map voice AI to a specific, high-impact business use case before choosing tools; don't lead with technology

- Integrate AI voice with your CRM, ERP, and workflow systems as a connected layer, not a standalone tool

- Build human-in-the-loop oversight from day one to catch errors and handle escalations efficiently

- Prioritize compliance, data security, and multilingual capability for regulated industries and global markets

- Define KPIs before go-live and build a continuous optimization loop so your system improves over time

Best Practice 1: Start with a Use Case Audit, Not a Tool Search

Businesses fail when they lead with the technology instead of the business problem. Voice AI deployed without a defined use case produces noise, not value. The first step is identifying which workflows are voice-ready, meaning repetitive, high-volume, and conversational by nature.

Running a Use Case Audit

Map your current customer and internal workflows to identify where delays, drop-offs, or inconsistency occur. Rank them by impact and feasibility. High-ROI use cases include:

- Inbound lead qualification

- Cart abandonment follow-ups

- Appointment scheduling

- First-level HR screening

- Policy renewal reminders

- Payment collection calls
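To make the "rank by impact and feasibility" step concrete, here is a minimal Python sketch that scores each candidate as impact times feasibility on a 1-5 scale and keeps the top one or two for a pilot. The `UseCase` class, the scoring scale, and the scores themselves are illustrative assumptions, not benchmarks; substitute the numbers from your own audit.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int       # 1-5: expected business value (volume x value per interaction)
    feasibility: int  # 1-5: data access, integration effort, conversational simplicity

    @property
    def score(self) -> int:
        return self.impact * self.feasibility


def rank_use_cases(candidates: list[UseCase], pilot_size: int = 2) -> list[UseCase]:
    """Sort by impact x feasibility (ties broken by impact) and keep the top few."""
    ranked = sorted(candidates, key=lambda uc: (uc.score, uc.impact), reverse=True)
    return ranked[:pilot_size]


# Hypothetical audit scores, for illustration only.
audit = [
    UseCase("Inbound lead qualification", impact=5, feasibility=4),
    UseCase("Cart abandonment follow-ups", impact=4, feasibility=5),
    UseCase("Appointment scheduling", impact=3, feasibility=5),
    UseCase("First-level HR screening", impact=3, feasibility=3),
    UseCase("Policy renewal reminders", impact=4, feasibility=4),
    UseCase("Payment collection calls", impact=5, feasibility=2),
]

for uc in rank_use_cases(audit):
    print(uc.name, uc.score)
```

Multiplying the two scores penalizes use cases that are valuable but hard to automate (payment collection in this made-up data), which is usually what you want for a first pilot.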

A proper use case audit goes beyond "answer calls faster." Ask: what actions should the voice agent trigger after the conversation ends? Typical answers include CRM updates, follow-up emails, payment links, or interview scheduling. This end-to-end thinking separates isolated voice tasks from complete voice workflows.

Matching Use Cases to Business Goals

Different industries map voice AI to specific outcomes:

- Insurance companies use it for policy renewals and claims intake

- E-commerce businesses deploy it for cart recovery and order tracking

- HR teams use it to screen candidates at scale before human recruiters step in

Take cart abandonment as a concrete example. UnleashX's Sarah, an AI employee, re-engages customers who dropped off at checkout through voice, WhatsApp, and chat, guiding them through checkout, sending payment links, and tracking each cart through to conversion.

Prioritize 1-2 use cases for a pilot rather than automating everything at once. A focused pilot validates assumptions, builds internal confidence, and delivers faster ROI.

The numbers back this up: a Forrester study on Talkdesk CX Cloud found a 208% ROI over three years, with payback in under six months.

Best Practice 2: Integrate AI Voice Deeply with Your Existing Business Stack

An AI voice system that doesn't connect to your CRM, order management, or HR platform operates in a silo. It can hold a conversation, but it can't take action, update records, or pass context to the next step in the workflow.

What Deep Integration Means in Practice

The AI voice layer should:

- Read from and write to your CRM in real time

- Access customer history before a call

- Update deal stages after a call

- Trigger downstream workflows (payment links, interview scheduling, follow-up emails)

- Sync across channels like voice, chat, WhatsApp, and email
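The sketch below illustrates the "write back and trigger downstream" part of that list: a handler that runs when a call ends, maps the conversation outcome to a deal stage, and queues follow-up actions. `FakeCRM`, `CallResult`, the outcome names, and the rules table are hypothetical stand-ins. A real deployment would call your CRM's API or receive the voice platform's webhook instead.

```python
from dataclasses import dataclass, field


@dataclass
class CallResult:
    """Summary a voice platform might emit when a call ends (hypothetical schema)."""
    contact_id: str
    outcome: str  # e.g. "qualified", "payment_pending", "interview_requested"


@dataclass
class FakeCRM:
    """In-memory stand-in for a real CRM API, used only for illustration."""
    deal_stages: dict[str, str] = field(default_factory=dict)
    tasks: list[tuple[str, str]] = field(default_factory=list)

    def update_stage(self, contact_id: str, stage: str) -> None:
        self.deal_stages[contact_id] = stage

    def queue_task(self, contact_id: str, task: str) -> None:
        self.tasks.append((contact_id, task))


# Map each conversation outcome to a deal stage and the downstream actions it triggers.
OUTCOME_RULES = {
    "qualified": ("sales_qualified", ["send_follow_up_email"]),
    "payment_pending": ("awaiting_payment", ["send_payment_link"]),
    "interview_requested": ("screening_passed", ["schedule_interview"]),
}


def handle_call_completed(result: CallResult, crm: FakeCRM) -> list[str]:
    """Write the outcome back to the CRM and queue follow-up work."""
    # Unknown outcomes fall back to a human-review task instead of being dropped.
    stage, actions = OUTCOME_RULES.get(result.outcome, ("needs_review", ["notify_human"]))
    crm.update_stage(result.contact_id, stage)
    for action in actions:
        crm.queue_task(result.contact_id, action)
    return actions


crm = FakeCRM()
print(handle_call_completed(CallResult("c-101", "payment_pending"), crm))
print(crm.deal_stages)
```

The fallback rule matters: a call that ends with an unexpected outcome still produces a visible task, so the CRM does not silently drift out of sync with what happened on the call.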

Technical Considerations

Key factors include:

- API compatibility - Does the platform support your existing systems?

- Webhook support - Can it trigger actions in real time?

- Latency requirements - High latency breaks conversational flow

- Pre-built connectors vs. custom development - Pre-built saves time and reduces errors

Human conversations naturally flow with pauses of 200-500 milliseconds. Production voice AI agents must maintain 800ms or lower latency to preserve conversational flow. When latency exceeds 800ms, users assume the system didn't hear them and start speaking again, leading to overlap and abandoned interactions.
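Because the 800 ms threshold covers the whole turn, it helps to budget it across the pipeline stages that run between the caller finishing and the agent starting to speak. This sketch assumes the stages run one after another; the stage names and millisecond values are illustrative assumptions, so measure your own pipeline before relying on any of them.

```python
# Hypothetical per-stage latencies (ms) for one conversational turn, measured from
# the moment the caller stops speaking to the first audio the agent plays back.
TURN_BUDGET_MS = 800

stages_ms = {
    "end_of_speech_detection": 200,
    "speech_to_text_final": 150,
    "llm_first_token": 250,
    "text_to_speech_first_audio": 120,
    "network_round_trips": 60,
}


def check_latency_budget(stages: dict[str, int], budget_ms: int = TURN_BUDGET_MS) -> tuple[int, int]:
    """Return (total latency, remaining headroom); negative headroom means over budget."""
    total = sum(stages.values())
    return total, budget_ms - total


total, headroom = check_latency_budget(stages_ms)
slowest = max(stages_ms, key=stages_ms.get)
print(f"total={total} ms, headroom={headroom} ms, slowest stage={slowest}")
```

In this made-up breakdown, five individually reasonable stages leave only 20 ms of headroom, which is why a regression in any single stage can push a turn past the threshold.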

UnleashX is built around this constraint: with sub-700ms latency and 99% uptime, it stays within the response window users expect from a natural conversation.

Integration with CRM and Workflow Tools

The most effective AI voice deployments run through a central orchestration layer that connects the voice agent to the rest of the tool stack; UnleashX's layer connects 200+ tools. UnleashX AI employees like Peter operate on this model, autonomously updating CRM records, sending follow-ups, and managing lead nurturing end-to-end.

The payoff is measurable: customers activating HubSpot integrations with voice platforms see a 42% higher free trial-to-customer conversion rate.

Before full deployment: Test integrations with real data. Run a staged rollout where AI voice handles a defined slice of calls or leads while you monitor integration accuracy and CRM sync reliability.
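One common way to carve out a "defined slice" is deterministic bucketing: hash each lead or caller ID into one of 100 buckets and send only the lowest N buckets to the AI voice agent. This is a generic technique sketched in Python, not a feature of any particular platform; `routed_to_ai` and the lead ID format are hypothetical.

```python
import hashlib


def routed_to_ai(lead_id: str, rollout_percent: int) -> bool:
    """Deterministically assign a lead to the AI-voice slice of a staged rollout.

    Hashing the ID (rather than picking randomly per call) keeps each lead in the
    same arm across repeated calls, so CRM sync results stay comparable.
    """
    digest = hashlib.sha256(lead_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket in 0..99
    return bucket < rollout_percent


leads = [f"lead-{n}" for n in range(1000)]
ai_slice = [lead for lead in leads if routed_to_ai(lead, rollout_percent=10)]
print(len(ai_slice))  # roughly 100, not exactly
```

Raising `rollout_percent` from 10 to 20 keeps every lead already in the AI slice there, so integration-accuracy and sync data stay comparable as you expand.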

Best Practice 3: Embed Human-in-the-Loop Oversight from Day One

Human-in-the-loop (HITL) is a best practice, not a fallback. Even the most accurate AI voice systems will encounter edge cases, emotional customers, compliance-sensitive conversations, or ambiguous requests that require human judgment. Building in oversight from the start prevents errors from compounding.

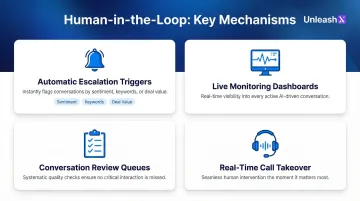

Key HITL Mechanisms

Implement these from the beginning:

- Automatic escalation triggers - Negative sentiment, high-value deal thresholds, complaint keywords

- Live monitoring dashboards - Real-time visibility into active conversations

- Conversation review queues - Systematic quality checks

- Real-time call takeover - Humans can step in without disrupting the customer experience
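The escalation triggers in the first bullet can be expressed as simple, auditable rules. This sketch assumes the platform already provides a per-turn sentiment score on a -1 to 1 scale and the deal value from the CRM; the thresholds, keyword list, and `TurnSignals` class are hypothetical and should be tuned against your own reviewed conversations.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against your own review data.
NEGATIVE_SENTIMENT_THRESHOLD = -0.5   # sentiment scored on a -1.0..1.0 scale
HIGH_VALUE_DEAL_THRESHOLD = 50_000    # deal value in your reporting currency
COMPLAINT_KEYWORDS = {"complaint", "refund", "cancel", "lawyer", "ombudsman"}


@dataclass
class TurnSignals:
    transcript: str
    sentiment: float   # output of whatever sentiment model the platform uses
    deal_value: float


def escalation_reasons(turn: TurnSignals) -> list[str]:
    """Return every rule that fires; an empty list means the AI keeps the call."""
    reasons = []
    if turn.sentiment <= NEGATIVE_SENTIMENT_THRESHOLD:
        reasons.append("negative_sentiment")
    if turn.deal_value >= HIGH_VALUE_DEAL_THRESHOLD:
        reasons.append("high_value_deal")
    # Normalize words by stripping trailing punctuation and lowercasing.
    words = {w.strip(".,!?").lower() for w in turn.transcript.split()}
    if words & COMPLAINT_KEYWORDS:
        reasons.append("complaint_keyword")
    return reasons


turn = TurnSignals("I want a refund, this is unacceptable!", sentiment=-0.8, deal_value=1_200)
print(escalation_reasons(turn))  # ['negative_sentiment', 'complaint_keyword']
```

Returning every rule that fired, rather than a yes/no, gives the human who takes over the call immediate context and makes the review queue easier to audit.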

The Change Management Dimension

Employees often resist AI voice adoption because they fear replacement. Reframe HITL as a collaboration model: AI handles volume and repetition while human agents handle complexity, empathy, and exceptions. This framing improves adoption and reduces internal friction.

In fact, 92% of representatives reported higher job satisfaction after adopting AI, citing fewer mundane tasks and more meaningful customer conversations.

Setting Escalation Rules and Quality Guardrails

Define escalation criteria during setup:

- What conversation types always go to a human?

- What response patterns trigger a review flag?

- How are quality scores tracked over time?
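Those three questions translate naturally into code: a fixed set of conversation types that always route to a human, a per-call score threshold that sends weak calls to the review queue, and a rolling average to track quality over time. The conversation types, the 0.70 floor, and the 0-to-1 quality score are assumptions for illustration; how the score itself is produced (QA rubric, model grader, or both) is left to your setup.

```python
from collections import deque
from statistics import mean

# Hypothetical rules; each team defines its own during setup.
ALWAYS_HUMAN_TYPES = {"legal_dispute", "claim_denial", "bereavement"}
REVIEW_SCORE_FLOOR = 0.70  # per-call quality score on a 0..1 scale


def needs_human(conversation_type: str) -> bool:
    """Conversation types that always go to a human, decided before the AI engages."""
    return conversation_type in ALWAYS_HUMAN_TYPES


class QualityTracker:
    """Flags weak calls for review and tracks a rolling average of quality scores."""

    def __init__(self, window: int = 100) -> None:
        self.scores = deque(maxlen=window)  # oldest scores drop off automatically

    def record(self, score: float) -> bool:
        """Store the score; return True if this call should enter the review queue."""
        self.scores.append(score)
        return score < REVIEW_SCORE_FLOOR

    def rolling_average(self) -> float:
        return mean(self.scores) if self.scores else 0.0


tracker = QualityTracker(window=3)
flags = [tracker.record(s) for s in (0.9, 0.65, 0.8, 0.85)]
print(flags, round(tracker.rolling_average(), 2))  # [False, True, False, False] 0.77
```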

HITL is especially critical in regulated industries such as finance (GDPR, audit trails), insurance (IRDAI compliance), and healthcare, where AI-generated statements can carry legal and reputational risk if left unchecked.

The gap between lab benchmarks and live deployments is real: open-source ASR benchmarks frequently fail to predict production success, with accuracy degradation reaching 5.7x once acoustic conditions and domain jargon enter the picture.

- HITL workflows reduce transcription Word Error Rate (WER) by over 50%, making human review a direct, measurable accuracy gain

- Production-grade deployments that skip HITL at launch tend to compound errors before teams have enough signal to course-correct
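Word Error Rate is the standard ASR accuracy metric: WER = (S + D + I) / N, where S, D, and I are the word substitutions, deletions, and insertions needed to turn the transcript into the reference, and N is the number of words in the reference. The Python function below computes it with word-level edit distance; the example sentences are invented to show how a human correction pass lowers WER, not drawn from any benchmark.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference.

    Computed as word-level Levenshtein distance with dynamic programming.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dist[i][j] = edits to turn the first i reference words into the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # i deletions
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # j insertions
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution or match
            )
    return dist[len(ref)][len(hyp)] / len(ref)


reference = "please renew my policy before the due date"
raw_asr = "please review my polish before due date"
corrected = "please renew my policy before due date"
print(word_error_rate(reference, raw_asr))    # 0.375
print(word_error_rate(reference, corrected))  # 0.125
```

Here the raw transcript has three errors against an eight-word reference (two substitutions and one deletion), and after human review one deletion remains.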

Getting HITL right early sets the foundation for the next challenge: maintaining consistent performance as conversation volume scales.

Best Practice 4: Design for Compliance, Security, and Multilingual Capability

Voice AI systems handle sensitive data (customer PII, financial details, health information), so retrofitting security after deployment typically costs more and leaves gaps that auditors will find.

Core Security and Compliance Requirements

- Encrypt all voice data in transit and at rest, not just storage backups

- Enforce role-based access controls with exportable audit logs for every interaction

- Define retention and deletion schedules that map to GDPR, IRDAI, or your applicable framework

- Confirm data residency requirements before signing any vendor contract
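A retention schedule is easiest to enforce when it is data rather than a policy document. The sketch below assigns each record type a retention period and lists which records are due for deletion on a given date. The periods shown are placeholders, not legal guidance; the actual values must come from your reading of GDPR, IRDAI, or whichever framework applies.

```python
from datetime import date, timedelta

# Placeholder retention periods (days) per record type; NOT legal guidance.
RETENTION_DAYS = {
    "call_recording": 90,
    "transcript": 365,
    "consent_receipt": 365 * 7,
}


def deletion_due(record_type: str, created: date) -> date:
    """Date on which a record of this type must be deleted."""
    return created + timedelta(days=RETENTION_DAYS[record_type])


def records_to_purge(records: list[tuple[str, str, date]], today: date) -> list[str]:
    """Return IDs of records whose retention period has expired as of `today`."""
    return [rid for rid, rtype, created in records if deletion_due(rtype, created) <= today]


records = [
    ("rec-1", "call_recording", date(2025, 1, 1)),
    ("rec-2", "transcript", date(2025, 1, 1)),
    ("rec-3", "consent_receipt", date(2019, 1, 1)),
]
print(records_to_purge(records, today=date(2025, 6, 1)))  # ['rec-1']
```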

Under GDPR, voice data processed to identify a specific person is biometric data, a special category that generally requires explicit consent. Security exposure is also widespread: in 2025, 99.4% of CISOs experienced at least one SaaS or AI ecosystem security incident.

Compliance infrastructure and language reach are closely linked: a system that captures consent in English but operates in Hindi still has a compliance gap. UnleashX addresses this with 100% compliance monitoring across IRDAI, GDPR, and internal audit protocols, making every interaction fully traceable.
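One way to catch that gap is to record the language consent was captured in and check it against the language the conversation actually ran in. `ConsentReceipt`, its fields, and the ISO 639-1 language codes are illustrative assumptions, not a regulatory schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ConsentReceipt:
    """Hypothetical consent record; fields are illustrative, not a regulatory schema."""
    call_id: str
    language: str          # language the consent prompt was delivered in, e.g. "en"
    granted: bool
    captured_at: datetime  # kept for the audit trail


def consent_gaps(receipt: ConsentReceipt, conversation_language: str) -> list[str]:
    """List problems that would leave this call's consent undocumented or unclear."""
    gaps = []
    if not receipt.granted:
        gaps.append("consent_not_granted")
    if receipt.language != conversation_language:
        gaps.append("consent_language_mismatch")
    return gaps


receipt = ConsentReceipt("call-42", language="en", granted=True,
                         captured_at=datetime(2025, 3, 1, 10, 0, tzinfo=timezone.utc))
print(consent_gaps(receipt, conversation_language="hi"))  # ['consent_language_mismatch']
```

A check like this flags the English-consent, Hindi-conversation case before it reaches an auditor.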

Supporting Regional Languages and Local Accents

Businesses operating in India, Southeast Asia, or multilingual markets lose significant reach when their AI voice system only handles English. In India, over 85% of the population is not fluent in English, and e-commerce platforms incorporating multilingual voice search observe 40-60% higher conversion rates among regional language users.

Voice AI platforms should support regional languages and local accents natively, not just through translation overlays. UnleashX supports 100+ global languages and 12+ Indian languages, including Hindi, Tamil, and Bangla, using native-language models rather than translation layers.

Auditing a Vendor for Compliance Readiness

Ask these questions:

- Where is data stored (data residency)?

- Are audit logs available and exportable?

- How is consent managed and documented?

- What are uptime SLAs and incident response procedures?

The answers to these questions determine whether a vendor is genuinely compliance-ready or just checkbox-compliant on paper.

Best Practice 5: Define KPIs Before Go-Live and Optimize Continuously

Most AI voice integrations underperform not because the technology fails, but because success was never defined. Before deploying, establish baseline metrics for the workflows being automated and set target KPIs.

KPI Categories That Matter for AI Voice

Operational Efficiency:

- Average handle time (AHT)

- First-call resolution rate

- Deflection rate

- Agent escalation rate

Revenue Impact:

- Conversion rate

- Pipeline contribution

- Cost per qualified lead

Experience Quality:

- Customer satisfaction scores (CSAT)

- Sentiment trends
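The operational efficiency metrics above can be computed directly from call records. This sketch assumes each record carries handle time, a first-contact-resolution flag, and flags for whether the AI handled the call alone or escalated it. Definitions of deflection in particular vary between teams, so treat these field names and formulas as one reasonable interpretation.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    handle_seconds: int
    resolved_first_contact: bool
    handled_fully_by_ai: bool  # no human agent involved ("deflected")
    escalated: bool            # handed to a human mid-conversation


def operational_kpis(calls: list[CallRecord]) -> dict[str, float]:
    """Compute AHT, first-call resolution, deflection, and escalation rates."""
    n = len(calls)
    if n == 0:
        raise ValueError("no calls to measure")
    return {
        "aht_minutes": sum(c.handle_seconds for c in calls) / n / 60,
        # Booleans sum as 0/1, so each rate is the share of calls with the flag set.
        "first_call_resolution": sum(c.resolved_first_contact for c in calls) / n,
        "deflection_rate": sum(c.handled_fully_by_ai for c in calls) / n,
        "escalation_rate": sum(c.escalated for c in calls) / n,
    }


calls = [
    CallRecord(240, True, True, False),
    CallRecord(300, True, False, True),
    CallRecord(180, False, True, False),
    CallRecord(360, True, False, True),
]
print(operational_kpis(calls))
```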

Industry benchmarks show significant improvements with voice AI:

| KPI | Human-Only Baseline | Voice AI Benchmark | Improvement |
|---|---|---|---|
| Average Handle Time | 7.5 minutes | 3.6-4.8 minutes | 36-52% reduction |
| First Call Resolution | 70-75% | 82-90% | +12-20 points |
| Cost Per Contact | $6.50-$9.00 | $0.80-$2.50 | 70-85% reduction |
| Abandonment Rate | 8-12% | 1.5-3% | 62-81% reduction |

Source: Voice AI Contact Center KPIs
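The Improvement column mixes two kinds of change: relative reductions for handle time, cost, and abandonment, and absolute percentage-point gains for first call resolution. A short Python check makes the arithmetic explicit using the table's own figures.

```python
def percent_reduction(baseline: float, new: float) -> float:
    """Relative reduction from baseline, in percent."""
    return (baseline - new) / baseline * 100


def point_change(baseline_pct: float, new_pct: float) -> float:
    """Absolute change between two rates, in percentage points."""
    return new_pct - baseline_pct


# Average handle time: 7.5-minute baseline vs. the 3.6-4.8 minute range.
print(round(percent_reduction(7.5, 4.8)))  # 36
print(round(percent_reduction(7.5, 3.6)))  # 52

# First call resolution: 70% baseline vs. the 82-90% range.
print(point_change(70, 82), point_change(70, 90))  # 12 20
```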

These benchmarks are achievable, but only if teams track the right metrics from day one. In production deployments, UnleashX customers have hit 57% faster follow-ups and 2.5x lead conversion by tying AI configurations directly to pre-defined KPI targets.

Build a Review Cadence That Drives Real Improvement

AI voice models should be regularly retrained on:

- New conversation data

- Accent variations

- Product or policy updates

Set a review cadence (monthly or quarterly) to assess KPI trends and adjust scripts, escalation rules, and integration workflows accordingly. Teams that scale successfully treat this as an ongoing function: someone owns the KPIs, reviews the conversation data, and has the authority to push changes before scaling up call volume compounds any gaps.
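A review cadence works best when each KPI has an explicit target and direction. The sketch below compares one period's actuals against targets and returns the misses for the KPI owner to act on; the targets and monthly figures are hypothetical.

```python
# Hypothetical targets and the direction in which each KPI should move.
TARGETS = {
    "aht_minutes": (4.5, "lower"),
    "first_call_resolution": (0.82, "higher"),
    "escalation_rate": (0.15, "lower"),
}


def kpis_off_target(actuals: dict[str, float]) -> list[str]:
    """Return the KPIs that missed target this review period."""
    misses = []
    for name, (target, better) in TARGETS.items():
        value = actuals[name]
        # "lower is better" misses when above target; "higher is better" when below.
        missed = value > target if better == "lower" else value < target
        if missed:
            misses.append(name)
    return misses


this_month = {"aht_minutes": 4.2, "first_call_resolution": 0.78, "escalation_rate": 0.19}
print(kpis_off_target(this_month))  # ['first_call_resolution', 'escalation_rate']
```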

Frequently Asked Questions

How do AI voice agents handle Hindi and regional languages for Indian customers?

Modern voice AI platforms support natural conversations in Hindi, Tamil, Telugu, Kannada, Marathi, Bengali, and code-mixed Hinglish. UnleashX voice agents detect caller language automatically, switch mid-call where needed, and integrate with WhatsApp for follow-ups, which matches how Indian buyers in BFSI, real estate, and D2C actually engage.

What are the best practices for integrating AI with ERP systems?

Ensure API compatibility and use platforms with pre-built ERP connectors to avoid hidden integration costs, which are typically 3–5x higher with DIY approaches. Test data sync accuracy in a controlled environment and define data governance rules before go-live.

What is the difference between an AI voice bot and a full-stack AI voice employee?

Basic voice bots handle single-task, script-driven interactions. Full-stack AI voice employees orchestrate end-to-end workflows: taking action, updating systems, and following up autonomously across channels, backed by 200+ tool integrations.

How do you ensure data privacy and compliance when using AI voice technology?

Implement end-to-end encryption, access controls, and data retention policies. Choose a vendor aligned with relevant regulatory frameworks like GDPR or IRDAI, and maintain audit logs for compliance verification.

Which Indian companies are deploying AI voice agents in production?

Indian BFSI majors (HDFC, ICICI, SBI, Axis), real estate firms, lending NBFCs, and D2C brands are running voice AI in production for sales calls, KYC follow-ups, and customer support. NASSCOM has tracked rapid adoption across IT services and BPO firms (TCS, Infosys, Wipro, HCLTech) building voice AI practices for Indian and global enterprise clients.

What Indian regulations should I consider before deploying voice AI?

The Digital Personal Data Protection (DPDP) Act, 2023 governs personal data handling in voice interactions, including consent capture and audit trails. RBI guidelines on outsourcing apply to BFSI deployments, IRDAI rules cover insurance workflows, and TRAI's commercial communication rules govern outbound calling. Production-grade voice AI platforms maintain call recordings, language logs, and consent receipts to meet these requirements.

Want to see how UnleashX AI Employees can transform your business? Visit UnleashX to explore the full platform and book a personalized demo.